AI is often presented as a tool for progress, efficiency, and innovation. While those benefits are real, they come with a parallel set of risks that are frequently underestimated or misunderstood. AI systems influence decisions about people, finances, access to services, and security. When these systems fail, or are misused, the consequences can be significant.

Understanding the risks of Artificial Intelligence is about using these tools responsibly, with a clear view of their limitations and potential for harm.

Bias and discrimination

One of the most widely discussed risks in AI is bias. Machine learning systems learn from data. If that data reflects historical inequalities or imbalances, the model will reproduce them. This can lead to hiring systems favouring certain demographics, credit scoring models disadvantaging specific groups, and facial recognition systems performing unevenly across populations.

While the system doesn’t intentionally discriminate, it replicates patterns embedded in the data. Because these systems operate at scale, even small biases can affect large numbers of people.
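One simple way to make such bias visible is to compare how often each group receives a favourable outcome. The sketch below is illustrative only: the group names and decisions are made up, and `selection_rates` and `disparate_impact_ratio` are hypothetical helper names, not part of any particular library.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes per group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


# Made-up decisions from a hypothetical screening model.
decisions = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(round(ratio, 2))
```

A ratio well below 1.0 does not prove discrimination on its own, but it is a signal that the model's outcomes deserve a closer look.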

The black box problem

Many modern AI systems, particularly deep learning models, are difficult to interpret. This is often referred to as the black box problem.

Inputs go in, predictions come out. The internal reasoning is not easily explainable.

This creates challenges in high-stakes environments: why was a loan denied? Why was a medical condition flagged or missed? Why was a transaction blocked? Efforts to address this issue fall under Explainable AI, which aims to make model behaviour more transparent.

However, there is often a trade-off between performance and interpretability.
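Some explanation techniques treat the model purely as a black box. One of them, permutation importance, shuffles a single input feature and measures how much accuracy drops. The following is a minimal sketch under stated assumptions: `opaque_model` is a hypothetical stand-in for a system we can only call, and the loan-style data is invented for illustration.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's output matches the label."""
    correct = sum(1 for row, label in zip(rows, labels) if model(row) == label)
    return correct / len(rows)


def permutation_importance(model, rows, labels, feature_index, seed=0):
    """Drop in accuracy after shuffling one feature column across rows."""
    rng = random.Random(seed)
    column = [row[feature_index] for row in rows]
    rng.shuffle(column)
    shuffled_rows = [
        row[:feature_index] + [value] + row[feature_index + 1:]
        for row, value in zip(rows, column)
    ]
    return accuracy(model, rows, labels) - accuracy(model, shuffled_rows, labels)


def opaque_model(row):
    """Hypothetical black box: callers see only the output, not this logic."""
    income, age = row
    return 1 if income > 50 else 0


# Invented applicants as [income, age]; labels are the model's own decisions.
rows = [[20, 30], [80, 45], [30, 60], [90, 25], [40, 50], [70, 35]]
labels = [opaque_model(row) for row in rows]

income_importance = permutation_importance(opaque_model, rows, labels, 0)
age_importance = permutation_importance(opaque_model, rows, labels, 1)
print(income_importance, age_importance)
```

Shuffling a feature the model ignores (age, in this toy case) leaves accuracy unchanged, while shuffling one it depends on can only hurt it. Explanations like this describe behaviour, not the internal reasoning itself.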

Privacy and surveillance

AI systems rely heavily on data, much of which is personal. This creates a thin line between innovation and privacy. Examples include tracking user behaviour for targeted advertising, analyzing communication patterns, and monitoring physical movement through cameras and sensors.

Large-scale surveillance systems have raised global concerns, particularly when deployed without transparency or consent. Regulatory frameworks such as the EU's General Data Protection Regulation (GDPR) attempt to address these risks by enforcing data protection and user rights.

However, enforcement varies, and technology often evolves faster than regulation.

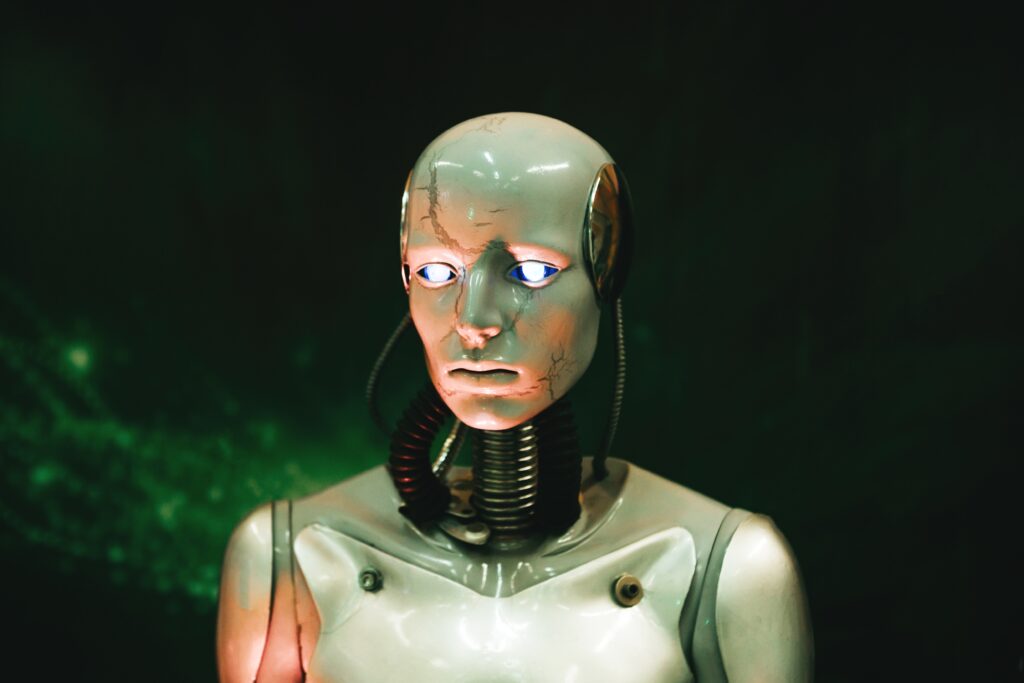

Misinformation and synthetic content

AI has made it significantly easier to generate realistic content. This includes text, images, audio, and video. Tools based on architectures like the Transformer can produce highly convincing outputs.

One major concern is the rise of deepfake technology. Deepfakes can impersonate individuals, spread false information, and manipulate public perception. The barrier to creating such content has dropped dramatically, increasing the risk of large-scale misinformation campaigns.

Security risks and adversarial attacks

AI systems are also targets in their own right. Common attack types include:

- Adversarial input: carefully crafted inputs can cause AI systems to make incorrect predictions. Examples include slightly altered images that fool recognition systems and manipulated text that bypasses filters.

- Data poisoning: attackers may inject malicious data into training datasets to influence model behaviour. This can degrade performance or introduce targeted weaknesses.

- Model extraction and inversion: attackers can reverse-engineer models, extract sensitive information from them, and, in some cases, reconstruct training data. These risks are particularly relevant in systems exposed through APIs.
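To make the adversarial input case concrete, here is a minimal sketch on a toy linear classifier. The weights, bias, and input are invented, and the perturbation follows the sign of the weights, in the spirit of gradient-sign attacks. Real attacks target far larger models, but the principle of a small, deliberate nudge is the same.

```python
def score(weights, bias, x):
    """Linear score: positive means class 1, otherwise class 0."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def predict(weights, bias, x):
    return 1 if score(weights, bias, x) > 0 else 0


def sign(value):
    return (value > 0) - (value < 0)


def adversarial_example(weights, x, label, epsilon):
    """Shift each feature by at most epsilon, against the true label.

    For a linear model the gradient of the score with respect to the
    input is just the weight vector, so its sign gives the direction.
    """
    direction = -1 if label == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model parameters and input, chosen for illustration.
weights = [0.9, -0.6, 0.4]
bias = -0.1
x = [0.3, 0.2, 0.1]

original = predict(weights, bias, x)
x_adv = adversarial_example(weights, x, label=original, epsilon=0.1)
attacked = predict(weights, bias, x_adv)
print(original, attacked)
```

Each feature moves by no more than 0.1, yet the predicted class changes, which is why input validation and robustness testing matter for exposed models.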

Automation and accountability

AI systems are often used to automate decision-making. This raises a critical question: who is responsible when something goes wrong?

Is it the developer who built the model? The organization deploying it? Or the operator using it? In practice, accountability is often unclear. This becomes especially problematic in financial decisions, healthcare diagnostics, law enforcement applications, and self-driving vehicles.

Without clear accountability, errors can be difficult to challenge or correct.

Economic and social impact

AI is reshaping labour markets and economic structures. Its effects are already visible in the automation of repetitive tasks, the displacement of certain job categories, and the creation of new roles requiring advanced technical skills.

While AI can increase productivity, it may also widen economic inequality if benefits are unevenly distributed.

Over-reliance on AI systems

As AI systems become more accurate, there is a risk of over-reliance. Users may trust outputs without verification, assume correctness due to automation, or reduce critical oversight.

This can lead to systemic failures when models encounter unexpected conditions. AI should support decision-making, not replace human judgement.
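One common safeguard is to act automatically only on high-confidence outputs and send everything else to a person. The sketch below assumes the model reports a confidence between 0 and 1. The `route_decision` name and the 0.9 threshold are hypothetical choices, not recommended values.

```python
def route_decision(prediction, confidence, threshold=0.9):
    """Return ("auto", prediction) or ("human_review", prediction).

    Low-confidence outputs are kept as a suggestion for the reviewer
    rather than applied directly.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return ("auto", prediction)
    return ("human_review", prediction)


# Made-up model outputs as (prediction, confidence) pairs.
outputs = [("approve", 0.97), ("deny", 0.62), ("approve", 0.90)]
routed = [route_decision(pred, conf) for pred, conf in outputs]
print(routed)
```

A threshold alone is not enough, since models can be confidently wrong on unfamiliar inputs, so periodic human sampling of the automatic decisions is still worthwhile.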

The ethical challenge

At its core, AI ethics is about balancing competing priorities: innovation vs safety, efficiency vs fairness, automation vs accountability, and data use vs privacy.

There is no universal solution. Different organizations and societies will make different trade-offs. However, ignoring these questions is not a viable option.

Towards responsible AI

Addressing AI risks requires a combination of technical, organizational, and regulatory approaches. Key principles should include transparency in how systems operate, accountability for outcomes, robust data governance, continuous monitoring and auditing, and security by design. AI systems should be treated as critical infrastructure, not just software components.

In the final article of this series, we will explore the future of AI. We will examine emerging trends, new technologies, and the long-term implications of increasingly intelligent systems.